By Olimpia Migliore, DVN Interior Consultant

In-vehicle voice assistance systems were a highlight of CES this year, with several tech companies introducing their innovations. SoundHound unveiled the first ever in-vehicle voice commerce ecosystem, Honda and Sony Honda Mobility introduced the Afeela 1 with an interactive AI voice agent, BMW showed their Intelligent Personal Assistant powered by Alexa Custom Assistant, and most automakers and suppliers are racing to offer the customer the ultimate experience in voice-controlled HMI.

Let’s look at how voice-controlled HMI has been evolving, what challenges remain, and how AI is accelerating the development of such systems.

A bit of voice-assistant history

In the 1990s, early speech recognition technology led to the creation of basic in-car voice control systems, allowing limited commands like phone calls and climate control, but with low accuracy and high user frustration. The 2000s saw significant advancements, notably in 2004 with Honda’s voice-controlled navigation system and Ford’s SYNC in 2007, which expanded and improved voice control for phone calls, media, and more.

The 2010s marked a transformative shift with the introduction of smartphone assistants like Siri and Google Assistant, which car manufacturers began integrating into vehicles around 2015. By the late 2010s, improvements in NLP (natural language processing) allowed more versatile interactions.

Today’s in-car voice assistants are focused on personalization and connectivity. They learn user preferences and integrate with smart home systems. The real revolution in the development of voice assistant systems has been touched off by AI-powered systems.

HMI basics

An interesting study, Application of Voice Interaction in Automotive Human Machine Interface Experience Design (by Huang & Huang of Huazhong University of Science and Technology, Wuhan), suggests the future of HMI design should focus on creating a seamless, scenario-based system that links various functions and minimizes driver distraction.

HMI, in a sense, can be interpreted as a series of driving tasks. The primary tasks in driving involve car control and monitoring road hazards, while secondary tasks like radio control or phone dialing demand more visual attention. The key difference between driving tasks lies in the degree of visual and manual interaction required. From a safety and accessibility standpoint, tasks that rely mainly on manual operations are considered safer because they minimize visual distraction. However, the rise of infotainment systems in cars has led to an increase in visually oriented tasks, making the in-car information system more complex. The challenge for automotive HMI design is to balance a better interactive experience against safety within this increasingly complex system.

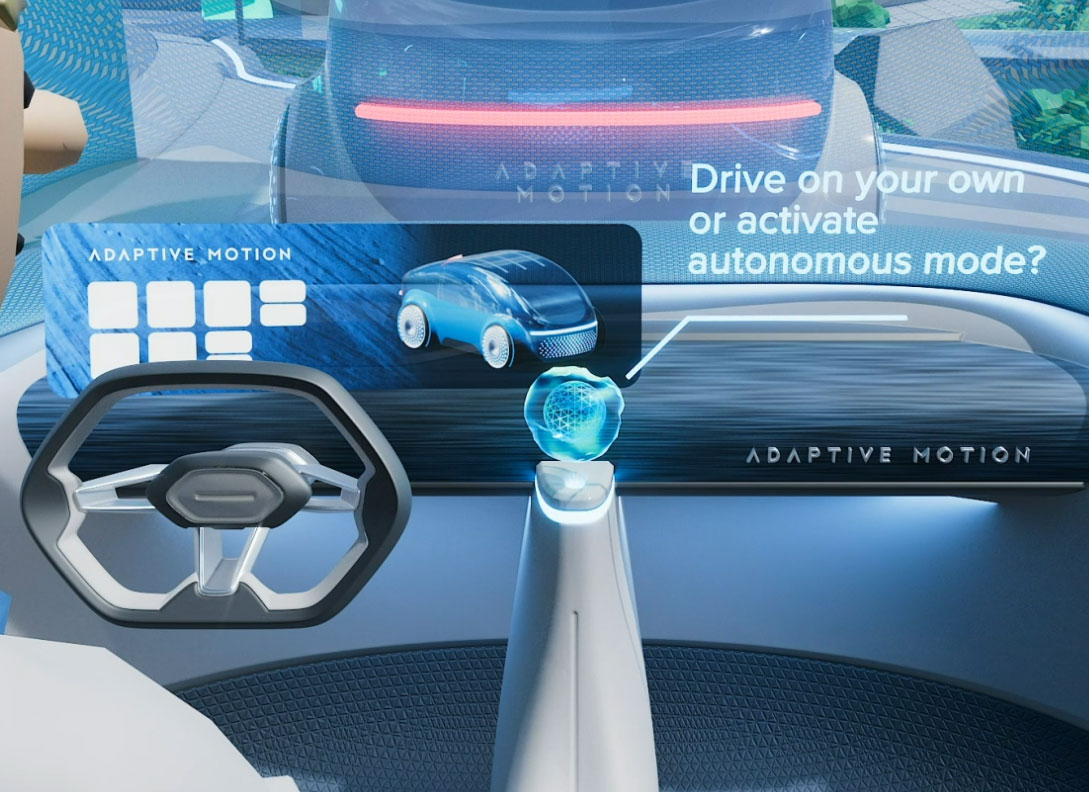

Scenario-based HMI

HMI design based on scenario tasks involves the application and cooperation of different interactive channels. One channel can serve as the main channel and be combined with another, such as voice + gesture or voice + button. A multichannel interactive interface integrates voice interaction, touchscreen interaction, spatial somatosensory interaction, eye-movement interaction, and other interactive modes. It gives the user feedback through multiple sensory channels for more intuitive and natural interaction. It also reduces the burden of excessive visual and auditory information processing during driving, and balances the information to avoid overloading any one sensory channel.

Voice-activated HMI

The right technology can increase efficiency and ease of use, particularly through voice interaction, which is seen as a key technology of the IoT era and has created a brand-new interaction scenario. Voice interaction can provide simple, accurate, and safe operations without distracting the driver. It helps people communicate with machines in a natural chat mode, without navigating a wall of buttons or reading and scrolling endless menus and sub-sub-submenus on touchscreens, the unsafety of which is a decreasingly-secret open secret. The machine, for its part, is configured to listen and speak, and to create an increasingly convincing simulation of understanding and thinking.

Usability principles and design elements

As the Huazhong University study highlights, there are some important factors to consider in designing voice interaction for HMI applications. These include:

- Letting the application determine the content and form of the dialogue. This calls for a detailed description of all the roles, situations, and forms of human-machine information exchange that occur while driving.

- Clarifying the mapping between functions and operational tasks. As users pursue different task goals, voice design is a process of continually working out the semantics of each function or operating task, and the form and expression the voice interaction should take.

- Understanding the users and problem domain. The user’s psychological model and emotional state should be understood as fully as possible. The feedback after an operation must meet the user’s expectations.

There are also issues related to testing and setting up voice assistants, such as:

- The acoustic environment can be affected by vehicle speed, number of occupants, HVAC settings and other audio sources.

- Road noise is a significant factor in the performance of voice control systems, as are other sources such as transmission and engine vibrations.

Most of these problems can be overcome by using a library of pre-recorded audio speech files collected from human subjects in a controlled audio environment and at different speeds.

Automation testing platforms can shorten development cycles and increase product quality. For example, Nextgen’s ATAM Connect solution can select test files and check the voice assistant output response against each input command. The system tests connected products using standard HMI user interfaces, just like the end user, improving the end user experience.
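To make the idea concrete, here is a minimal sketch of such a test loop: every pre-recorded utterance is crossed with every cabin condition, and the assistant’s response is checked against the expected one. It does not represent any vendor’s product. The file names, intent labels, speed and HVAC values, and the `stub_assistant` function are all hypothetical stand-ins for a real audio library and a real system under test.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class VoiceCase:
    audio_file: str       # hypothetical pre-recorded utterance
    expected_intent: str  # response the assistant should produce
    speed_kmh: int        # vehicle speed of the recorded noise condition
    hvac_level: int       # fan setting of the recorded noise condition


def build_matrix(utterances, speeds, hvac_levels):
    """Cross every recorded utterance with every cabin condition."""
    return [
        VoiceCase(audio, intent, speed, hvac)
        for (audio, intent), speed, hvac in product(
            utterances.items(), speeds, hvac_levels
        )
    ]


def run_suite(cases, assistant):
    """Feed each case to the assistant and collect mismatches."""
    failures = []
    for case in cases:
        actual = assistant(case)
        if actual != case.expected_intent:
            failures.append((case, actual))
    pass_rate = 1 - len(failures) / len(cases)
    return pass_rate, failures


def stub_assistant(case):
    """Stand-in for the system under test: fails only in a loud cabin."""
    known = {"call_home.wav": "phone.call", "set_temp_21.wav": "climate.set"}
    if case.speed_kmh >= 130 and case.hvac_level >= 3:
        return "unknown"
    return known.get(case.audio_file, "unknown")


utterances = {"call_home.wav": "phone.call", "set_temp_21.wav": "climate.set"}
cases = build_matrix(utterances, speeds=[0, 50, 130], hvac_levels=[0, 3])
pass_rate, failures = run_suite(cases, stub_assistant)
print(f"{len(cases)} cases, pass rate {pass_rate:.0%}, {len(failures)} failures")
```

In this toy run the only failures are the high-speed, high-fan cases, which is the kind of pattern a test matrix is meant to expose.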

VUI (voice user interfaces)

Voice-controlled systems could not exist without voice user interfaces, which enable users to interact with their in-car voice assistant.

Star Senior Conversational UX Designer Elisabeth Juergens says her company’s « expertise and extensive training in both design and linguistics allow us to create in-car digital assistants that are functional, intuitive and empathetic. We believe that every interaction between the driver and their in-car assistant should feel as natural as conversing with a human companion, without the need for drivers adjusting their speech patterns ».

To be successful, VUIs need to be human-centric. They must be able to recognize the nuances of human speech, including accents, dialects, and emotional cues. They need to capture mood and intentions, and to adapt to the personality and cultural background of the user.

The role of generative AI

Most of the latest voice assistant systems have an AI element, which enables more natural, personalized, and lifelike interactions between a driver and a vehicle. AI helps to address cultural sensitivity issues, which were a major shortcoming of previous systems without AI.

Star is a digital-solutions company. Their experts emphasize that AI voice assistants can be continuously updated throughout the life of a vehicle, something not possible with physical controls and possible only in a limited way with touchscreen interfaces. Moreover, AI VUI can collect passenger data to customize the system according to user preferences and behaviors, and can create additional revenue streams. This last point raises invasion-of-privacy concerns, best addressed by the automaker.

AI models are not risk-free, of course. They can amplify biases in the data—and there are many—which can offend users. Too much of that can damage an automaker’s brand image, which is a factor to consider when deciding whether to develop a proprietary solution or farm it out.

Adopting third-party platforms can shorten development time and take advantage of established ecosystems, offering users familiar interfaces and functionalities. But some companies prefer to develop their own system, reinforcing their brand identity. Mercedes and MINI are examples, with in-house in-car operating systems integrated into their infotainment ecosystems. Other makers, like Volvo, Polestar, and Renault, are using Android Automotive OS, benefiting from its well-known and extensive app ecosystem.

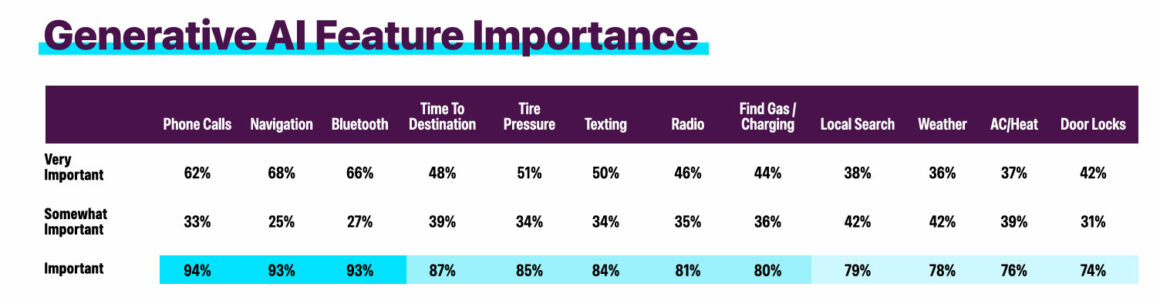

AI-powered systems can do things traditional voice assistants can’t, including:

- work with complex assignments (« find me a restaurant offering fusion food with parking and outdoor seating »)

- use real-time data to dynamically adjust routes, considering factors like traffic, weather, and personal preferences

- monitor vehicle systems and provide notifications about them

- program charging-safe routes for EVs and book charging stations

- adjust the in-car light and sound environment to suit the system’s interpretation of driver and passenger mood

- collect data from the DMS and adjust the in-cabin conditions to support the driver

- provide 24/7 support to customers

…and more to come soon.

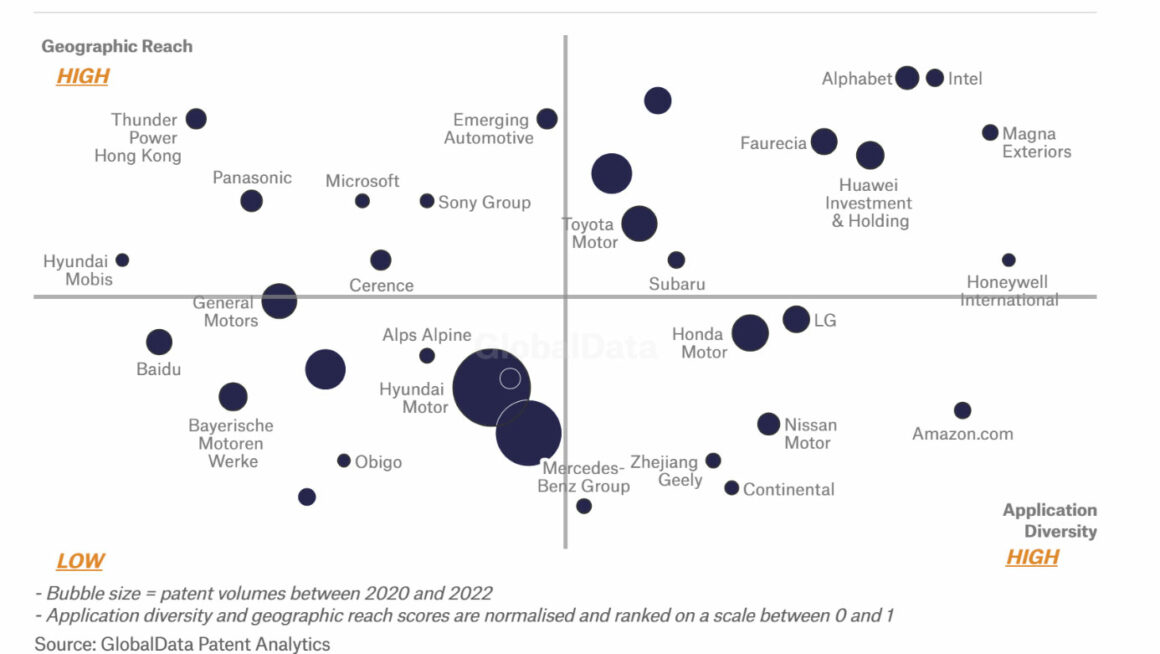

The market is dominated by tech giants whose generative AI voice assistants draw on vast data resources, an advantage automakers are struggling to match.

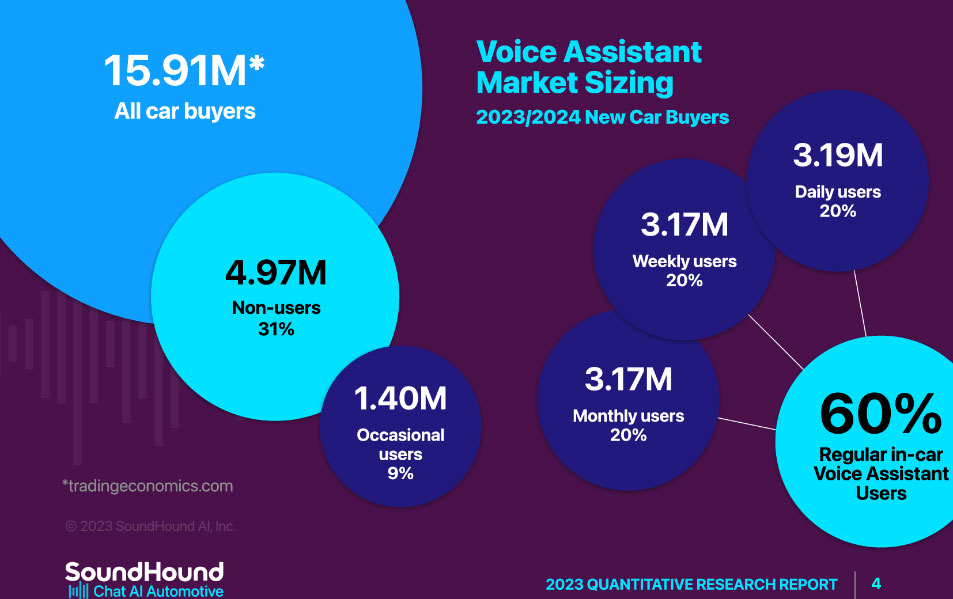

SoundHound

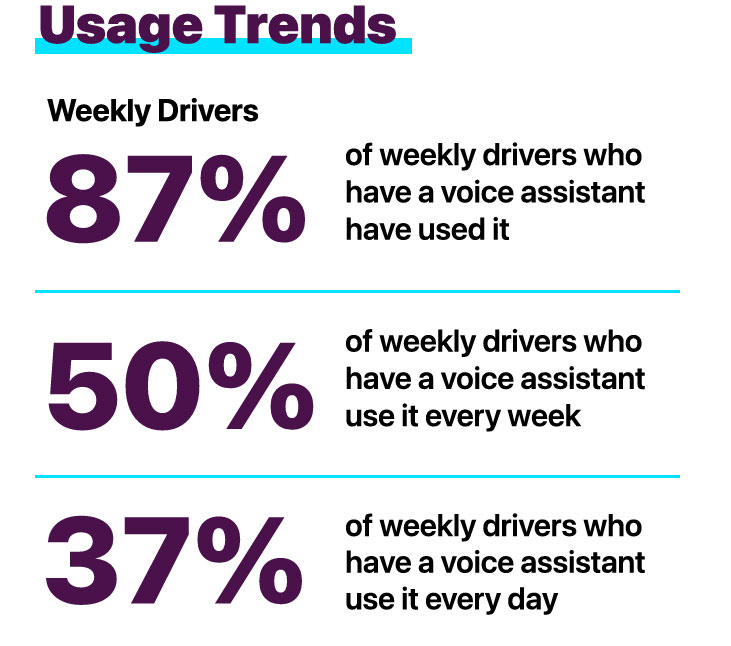

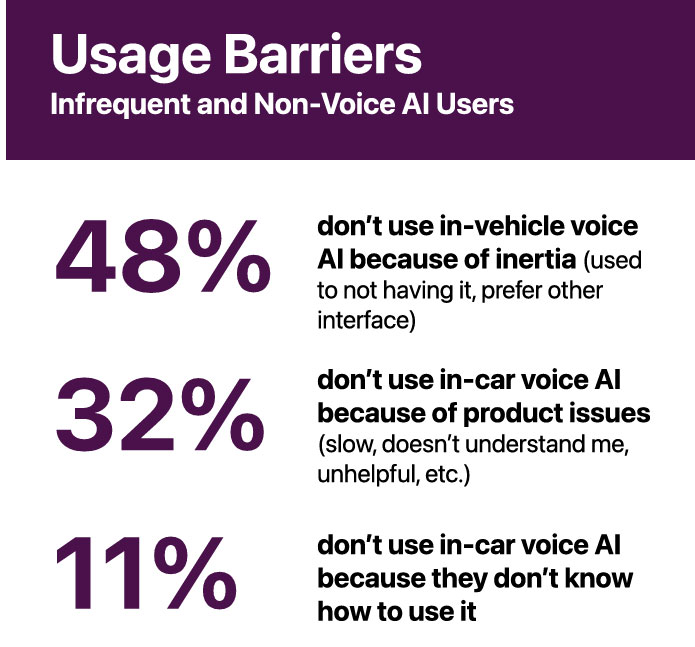

SoundHound, a leader in voice assistant technology, showed the first-ever in-vehicle voice commerce platform at CES 2025. It will let drivers and passengers place orders, pay seamlessly, and then navigate to the nearest pickup location, all directly from the car’s infotainment system and completely hands-free. SoundHound has also published a white paper with market data on voice assistants, confirming that this technology is becoming state of the art in every vehicle class.

Apple’s Siri and Google Assistant, already integrated through CarPlay and Android Auto (respectively), are becoming more sophisticated, using AI to parse driver commands, deliver naturalistic speech responses, and seamlessly connect with broader ecosystems such as smart homes. With access to virtually infinite consumer data like location, calendars, and browsing history, Apple and Google can offer deeply personalized and powerful user experiences.

Cerence’s CaLLM (Cerence Automotive Large Language Model) and CaLLM Edge are proprietary automotive large and small language models.

TomTom and Microsoft have developed a fully integrated, AI-powered conversational automotive assistant that enables more sophisticated voice interaction with infotainment, location search, and vehicle command systems.

Amazon and BMW showed a voice assistant combining Alexa with large language models (LLMs) and vehicle-relevant data. BMW powers their new Intelligent Personal Assistant with Alexa Custom Assistant.

Proprietary systems

Hyundai-Kia has filed the most patents in this field, followed by Ford, Porsche, and GM.

Examples from CES 2025

SoundHound and Lucid’s New Voice Assistant

Lucid launched the Lucid Assistant, developed in partnership with SoundHound, which leverages generative AI technology for a hands-free drive experience.

The new voice assistant is powered by SoundHound Chat, the first voice platform to reach full production with a voice assistant that integrates the latest generative AI technology. This integration will give drivers access to a voice assistant with interactive knowledge discovery, real-time data, and effortless in-vehicle controls.

Now live and available to Lucid Air owners, the Lucid Assistant responds to the wake words “Hey Lucid”. Drivers and passengers can ask questions in a natural and conversational way and receive fast, accurate responses through SoundHound’s technology. This technology ensures that the assistant selects the correct response from the most appropriate domain, whether that’s an answer powered by generative AI or real-time information about weather, sports, stocks, and more.
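As a rough illustration of what choosing a domain can mean, the sketch below scores a query against keyword sets for a few domains and falls back to a generative answer when nothing matches. This is a deliberately simple keyword router written for this article, not SoundHound’s proprietary method. The domain names, keyword lists, and handler functions are all hypothetical.

```python
# Hypothetical domain handlers; real ones would call live data services,
# vehicle controls, or a large language model.
def weather(query):
    return "weather: live forecast lookup"


def stocks(query):
    return "stocks: live quote lookup"


def vehicle(query):
    return "vehicle: execute in-car command"


def generative(query):
    return "generative: LLM answer"


# Assumed keyword sets, one per real-time or control domain.
DOMAINS = [
    ({"weather", "rain", "forecast"}, weather),
    ({"stock", "stocks", "shares", "price"}, stocks),
    ({"navigate", "seat", "window", "climate"}, vehicle),
]


def route(query):
    """Send the query to the best-matching domain, else to the LLM."""
    cleaned = "".join(ch if ch.isalnum() else " " for ch in query.lower())
    words = set(cleaned.split())
    best_handler, best_score = generative, 0
    for keywords, handler in DOMAINS:
        score = len(words & keywords)  # count of matching keywords
        if score > best_score:
            best_handler, best_score = handler, score
    return best_handler(query)


for q in ["Will it rain tomorrow?",
          "What is the stock price of Lucid?",
          "Set the climate to 21 degrees",
          "Who wrote Don Quixote?"]:
    print(q, "->", route(q))
```

A production system would use far richer language understanding than keyword overlap, but the structure is the same: specialized domains get first refusal, and the generative model handles the open-ended remainder.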

The voice assistant lets users access Lucid’s full car manual and can provide answers to almost any question about the vehicle. Drivers can also use voice to control features such as navigation, and many of the Lucid Assistant features and functions can also be accessed without needing a cellular connection.

When processing queries, the SoundHound system uses a proprietary approach which the makers claim massively reduces the risk of AI hallucinations—misleading, wrong, and unpredictable responses which are a real problem with LLMs. The assistant is available in English, Spanish, French, Arabic, German, and Dutch, with additional languages coming soon.

Far-Field Voice Capture

Voice control is an enduring quest for hands-free functionality that serves both safety and convenience. Far-field voice capture allows drivers to interact with in-car systems for navigation, phone calls, or media control without taking their hands off the wheel or eyes off the road.

In this context, far-field technology’s ability to distinguish between the driver’s voice and other background sounds, such as road noise, music, or conversations among passengers, becomes essential. This enhances the reliability and responsiveness of voice assistants. Ark Electronics USA has created Ark X Laboratories to bring voice experiences to market. Its next generation of advanced, high-performance far-field voice capture solutions, featuring Cirrus Logic, Sensory, and NXP technologies, is Amazon pre-qualified and production-ready. The solutions are aimed at voice-enabled IoT products and smart devices.